Starlink Satellites

This topic has 29 replies and 9 voices, and was last updated 6 years, 12 months ago by Dr Paul Leyland.


25 May 2019 at 9:28 pm #574332 Neil Morrison

I have just seen a video posted on Spaceweather.com this evening (25 May) of the passage of the first 60 Starlink satellites. They are reported to have been shining at between second and third magnitude. What on earth will our observing conditions deteriorate to when the full complement of several thousand satellites is in place?

Neil Morrison

25 May 2019 at 11:20 pm #581075 Grant Privett

Are the TLEs on Heavens Above yet?

26 May 2019 at 7:01 am #581076 Nick James

CalSky has an amateur-generated TLE for the train and will do predictions; use Spacecom ID 99201. The TLEs are currently over a day old, and the orbits will be evolving quite rapidly as each spacecraft uses its ion thruster to move to its operational configuration. The prospect of 12,000 of these things in LEO is indeed a concern for astronomers, although there will be long periods when they are not illuminated.

It was cloudy in Essex last night but John Mason had clear skies for the low pass at 22:16 UTC and didn’t see anything.

Epoch is 24 May 2019:

Starlink Train
1 99201U 19894A   19144.95562291  .00000000  00000-0  50000-4 0    02
2 99201  53.0084 171.3414 0001000   0.0000  72.1720 15.40507866    06

26 May 2019 at 12:54 pm #581077 Grant Privett

Thanks. Will give that a go when we get a clear night, though the forecast in Salisbury is cloudy for most of the next three days.

Are they really ready for operations so fast that they are already ion driving? That’s a pretty quick shakedown.
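The TLE Nick posted can be sanity-checked with a few lines of Python. This is a minimal sketch, not a substitute for a proper SGP4 propagator: it verifies the mod-10 line checksums and turns the mean motion (15.40507866 rev/day) into a rough altitude with Kepler's third law. The gravitational parameter and Earth radius are standard values assumed here, and J2 and drag are ignored.

```python
import math

# The TLE from the thread, laid out in the standard fixed-column format.
LINE1 = "1 99201U 19894A   19144.95562291  .00000000  00000-0  50000-4 0    02"
LINE2 = "2 99201  53.0084 171.3414 0001000   0.0000  72.1720 15.40507866    06"

# Assumed constants (not from the thread).
MU_EARTH = 398600.4418  # Earth's gravitational parameter, km^3/s^2
R_EARTH = 6378.137      # Earth's equatorial radius (WGS-84), km


def tle_checksum(line: str) -> int:
    """Mod-10 checksum of columns 1-68: digits count at face value, '-' counts as 1."""
    total = 0
    for ch in line[:68]:
        if ch.isdigit():
            total += int(ch)
        elif ch == "-":
            total += 1
    return total % 10


def mean_altitude_km(line2: str) -> float:
    """Rough altitude from the mean motion (columns 53-63, rev/day) via Kepler's
    third law. Assumption: ignores J2, drag and mean-vs-osculating differences."""
    rev_per_day = float(line2[52:63])
    n = rev_per_day * 2.0 * math.pi / 86400.0   # mean motion, rad/s
    a = (MU_EARTH / n ** 2) ** (1.0 / 3.0)       # semi-major axis, km
    return a - R_EARTH


if __name__ == "__main__":
    for line in (LINE1, LINE2):
        print(f"line {line[0]}: checksum ok = {tle_checksum(line) == int(line[68])}")
    period_min = 1440.0 / float(LINE2[52:63])
    print(f"inclination {float(LINE2[8:16]):.2f} deg, period {period_min:.1f} min, "
          f"altitude ~{mean_altitude_km(LINE2):.0f} km")
```

With the values posted, both checksums validate and the sketch gives a period of about 93.5 minutes and an altitude in the mid-400 km range. Because the spacecraft were raising their orbits, any such figure goes stale quickly.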

26 May 2019 at 1:29 pm #581079 Nick James

Not good from our latitudes in the summer, but another reason for imagers to use short subs and sigma-clip stacking, I guess. Not sure of the effect on surveys like LSST. It will image only after the end of astronomical twilight, and at latitude 30°S twilight is much shorter. I guess someone could calculate how much of the astronomical night 500 km-high LEO satellites are illuminated from that site.
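The calculation Nick suggests can be roughed out with spherical trigonometry. This sketch rests on simplifying assumptions: a spherical Earth, parallel sunlight with no penumbra or refraction, a satellite at the observer's zenith, and the Sun's hour angle advancing 15° per hour. Under those assumptions a satellite at height h overhead stays sunlit until the Sun is arccos(R/(R+h)) below the horizon, about 22° for 500 km, compared with 18° at the end of astronomical twilight.

```python
import math

# Assumptions: spherical Earth, cylindrical shadow, satellite at the zenith.
R_EARTH_KM = 6371.0        # mean Earth radius, km
ASTRO_TWILIGHT_DEG = 18.0  # Sun's depression at the end of astronomical twilight


def shadow_depression_deg(height_km: float) -> float:
    """Solar depression beyond which a zenith satellite at height_km is in
    Earth's shadow: arccos(R / (R + h))."""
    return math.degrees(math.acos(R_EARTH_KM / (R_EARTH_KM + height_km)))


def hour_angle_deg(lat_deg: float, dec_deg: float, sun_alt_deg: float) -> float:
    """Hour angle at which the Sun reaches sun_alt_deg, solving
    sin(alt) = sin(lat) sin(dec) + cos(lat) cos(dec) cos(H)."""
    lat, dec, alt = (math.radians(v) for v in (lat_deg, dec_deg, sun_alt_deg))
    cos_h = (math.sin(alt) - math.sin(lat) * math.sin(dec)) / (math.cos(lat) * math.cos(dec))
    if not -1.0 <= cos_h <= 1.0:
        raise ValueError("the Sun never reaches that altitude at this latitude and declination")
    return math.degrees(math.acos(cos_h))


def lit_minutes_after_astro_twilight(lat_deg: float, dec_deg: float, height_km: float) -> float:
    """Evening minutes between the end of astronomical twilight and the moment a
    zenith satellite at height_km enters shadow (hour angle: 15 deg per hour)."""
    h_twilight = hour_angle_deg(lat_deg, dec_deg, -ASTRO_TWILIGHT_DEG)
    h_shadow = hour_angle_deg(lat_deg, dec_deg, -shadow_depression_deg(height_km))
    return (h_shadow - h_twilight) / 15.0 * 60.0


if __name__ == "__main__":
    print(f"shadow depression at 500 km: {shadow_depression_deg(500.0):.1f} deg")
    minutes = lit_minutes_after_astro_twilight(-30.0, 0.0, 500.0)
    print("lit after astro twilight, 30S at equinox: " + f"{minutes:.0f} min")
```

For latitude −30° at an equinox (declination 0°) this gives roughly 19 minutes for a zenith satellite; satellites away from the zenith on the sunward side stay lit longer. At about 52°N in late May (declination near +21°), `hour_angle_deg` raises `ValueError` because the Sun never gets 18° below the horizon, which is the "all night at these latitudes" case.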

26 May 2019 at 1:39 pm #581078 Dr Paul Leyland

They will be illuminated for up to 2 hours after sunset and before dawn. That’s all night at these latitudes at this time of year. Over on Twitter there is a great deal of discussion involving both amateur and professional astronomers.

https://twitter.com/doug_ellison/status/1132443682018226176 is a good place to start.

One person points out that when, in a few years’ time, the whole 12,000-satellite constellation is in orbit, LSST will be on stream and observing much of the sky every night. Even if they are spread out as far as possible, there will be one Starlink satellite every 3.4 square degrees. The field of view of LSST is 9.3 square degrees. (Apologies for having 1.5 in the first version of this post.)

It’s going to be a nightmare.
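The 3.4 square degree figure is the whole celestial sphere divided evenly among 12,000 satellites. The arithmetic is sketched below under the same crude assumption of a uniform spread over the entire sky; it ignores that half the satellites are below the horizon, many are in shadow, and inclined orbits bunch towards latitudes near the inclination.

```python
import math

# Whole celestial sphere: 4*pi steradians converted to square degrees (~41,253).
SKY_SQ_DEG = 4.0 * math.pi * (180.0 / math.pi) ** 2

N_SATELLITES = 12_000      # constellation size quoted in the thread
LSST_FOV_SQ_DEG = 9.3      # field of view as quoted in the post

# Assumption: satellites spread uniformly over the whole sky.
area_per_sat = SKY_SQ_DEG / N_SATELLITES
sats_per_field = LSST_FOV_SQ_DEG / area_per_sat

print(f"{area_per_sat:.2f} deg^2 per satellite, ~{sats_per_field:.1f} per LSST field")
```

That works out at about 3.44 square degrees per satellite, or roughly 2.7 satellites per 9.3 square degree field on this naive model.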

26 May 2019 at 1:43 pm #581080 Dr Paul Leyland

Sigma clipping destroys photometric accuracy. If all I want is a pretty picture, I tend to use median stacking, but for scientifically useful images that’s only an option for astrometry.

I use 30 s subs, so bad images can be discarded without losing too much data, but I need to use arithmetic-mean co-addition for the great majority of my work.

26 May 2019 at 1:49 pm #581081 Grant Privett

That’s interesting. Is there a paper you could refer me to on that?

26 May 2019 at 2:10 pm #581082 Nick James

I’m surprised by that. I use winsorized sigma clipping with dithering for all my stacks, since I have planes, helicopters, etc. to deal with in addition to satellites, and dark frames are never perfect. I’ve never noticed any degradation in the photometric accuracy of my stacks using sigma clipping compared with a simple mean. In fact, I would have thought it would be better, since you are removing residual hot-pixel outliers. As Grant says, it would be interesting to see a reference that discusses this.
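The mean, median and sigma-clipping positions can be explored with a toy per-pixel simulation. This is an illustrative sketch, not a test of either claim. It assumes pure Gaussian noise, a single satellite hit of fixed amplitude in one of 20 frames, and plain 3σ clipping about the median (not the winsorized variant Nick mentions). It shows the familiar trade-off: the mean is dragged up by the trail, the median rejects it at the cost of roughly 20% to 25% more noise, and the clipped mean rejects it with little noise penalty in this idealised case. It does not model faint sources, non-Gaussian noise or varying transparency, so it cannot settle the photometric question.

```python
import random
import statistics
from typing import Callable, Dict, List, Tuple


def sigma_clipped_mean(values: List[float], k: float = 3.0, max_iter: int = 5) -> float:
    """Iteratively reject points more than k standard deviations from the median,
    then average the survivors (plain clipping, not winsorizing)."""
    kept = list(values)
    for _ in range(max_iter):
        centre = statistics.median(kept)
        spread = statistics.pstdev(kept)
        survivors = [v for v in kept if abs(v - centre) <= k * spread]
        if len(survivors) == len(kept):
            break
        kept = survivors
    return statistics.fmean(kept)


ESTIMATORS: Dict[str, Callable[[List[float]], float]] = {
    "mean": statistics.fmean,
    "median": statistics.median,
    "sigma-clipped mean": sigma_clipped_mean,
}


def simulate(n_frames: int = 20, sky_sigma: float = 10.0, true_value: float = 100.0,
             trail: float = 0.0, trials: int = 2000,
             seed: int = 1) -> Dict[str, Tuple[float, float]]:
    """Toy model of one pixel: n_frames Gaussian samples, one of which carries a
    satellite trail of amplitude `trail`. Returns (bias, scatter) per estimator."""
    rng = random.Random(seed)
    results: Dict[str, List[float]] = {name: [] for name in ESTIMATORS}
    for _ in range(trials):
        stack = [rng.gauss(true_value, sky_sigma) for _ in range(n_frames)]
        stack[0] += trail  # the trail lands in one frame only
        for name, estimator in ESTIMATORS.items():
            results[name].append(estimator(stack))
    return {name: (statistics.fmean(r) - true_value, statistics.pstdev(r))
            for name, r in results.items()}


if __name__ == "__main__":
    for label, trail in (("no trail", 0.0), ("one trail of +500", 500.0)):
        for name, (bias, scatter) in simulate(trail=trail).items():
            print(f"{label:18s} {name:18s} bias {bias:+6.2f}  scatter {scatter:5.2f}")
```

With these made-up parameters the mean's bias is close to 500/20 = 25 counts when a trail is present, while the median and clipped mean stay near zero.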

26 May 2019 at 2:59 pm #581083 Dr Paul Leyland

A quick search turns up one for which I only have the abstract, as the full text is behind a paywall.

Fischer, P. & Kochanski, G. P., “Optimal Addition of Images for Detection and Photometry”, Astron. J. 107 (1994) 802–810

Abstract:

In this paper we describe weighting techniques used for the optimal coaddition of CCD frames with differing characteristics. Optimal means maximum signal-to-noise (s/n) for stellar objects. We derive formulae for four applications: 1) object detection via matched filter, 2) object detection identical to DAOFIND, 3) aperture photometry, and 4) ALLSTAR profile-fitting photometry. We have included examples involving 21 frames for which either the sky brightness or image resolution varied by a factor of three. The gains in s/n were modest for most of the examples, except for DAOFIND detection with varying image resolution which exhibited a substantial s/n increase. Even though the only consideration was maximizing s/n, the image resolution was seen to improve for most of the variable resolution examples. Also discussed are empirical fits for the weighting and the availability of the program, WEIGHT, used to generate the weighting for the individual frames. Finally, we include appendices describing the effects of clipping algorithms and a scheme for star/galaxy and cosmic ray/star discrimination.

Two much more recent papers I have read in full, which specifically treat optimal co-addition, are https://arxiv.org/pdf/1512.06872.pdf and its sequel https://arxiv.org/abs/1512.06879.
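The core idea of optimal co-addition for photometry, weighting each frame by how much signal it carries relative to its noise, can be shown with a simple linear estimator. This is a generic minimum-variance weighting (w_i = s_i/σ_i², where s_i is a frame's relative throughput and σ_i its noise). It is not the specific formulae of Fischer & Kochanski or of the arXiv papers, which also deal with seeing differences, and the numbers in the example are made up.

```python
import math
from typing import Sequence, Tuple


def optimal_coadd(values: Sequence[float], scales: Sequence[float],
                  sigmas: Sequence[float]) -> Tuple[float, float]:
    """Estimate F from measurements x_i = s_i * F + noise with std sigma_i.

    Weights w_i = s_i / sigma_i**2 give the minimum-variance unbiased linear
    estimate F = sum(w_i x_i) / sum(w_i s_i), with error 1 / sqrt(sum((s_i/sigma_i)**2)).
    """
    weights = [s / sig ** 2 for s, sig in zip(scales, sigmas)]
    numerator = sum(w * x for w, x in zip(weights, values))
    denominator = sum(w * s for w, s in zip(weights, scales))  # = sum(s_i^2 / sigma_i^2)
    return numerator / denominator, 1.0 / math.sqrt(denominator)


def plain_mean_error(scales: Sequence[float], sigmas: Sequence[float]) -> float:
    """Error of an unweighted mean of the throughput-corrected values x_i / s_i."""
    return math.sqrt(sum((sig / s) ** 2 for s, sig in zip(scales, sigmas))) / len(scales)


if __name__ == "__main__":
    true_flux = 250.0
    scales = [1.0, 0.9, 0.8]       # hypothetical relative transparency per frame
    sigmas = [10.0, 20.0, 30.0]    # hypothetical noise, sky varying by a factor of three
    values = [s * true_flux for s in scales]  # noise-free, to show the estimator is unbiased
    estimate, err = optimal_coadd(values, scales, sigmas)
    print(f"estimate {estimate:.1f} +/- {err:.1f}; "
          f"unweighted mean error {plain_mean_error(scales, sigmas):.1f}")
```

In this example the weighted error is about 8.9 against about 14.9 for the unweighted mean, because the noisiest frame is heavily down-weighted rather than contributing equally.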

26 May 2019 at 4:28 pm #581085 Grant Privett

Thanks for that. I shall have a look at it. The appendices sound like the interesting bit.

I have always felt people are careless with median stacking. Transparency changes can have a huge impact on the results: simply normalising the backgrounds isn’t enough, and the received signal needs normalising too if you want to do things thoroughly (so some sort of gain correction is necessary). I tend to avoid really long exposures, median stack images in sets of ~10 and then co-add all the resulting frames.
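Grant's recipe (normalise both background and signal, median-combine in sets of about ten, then co-add the results) might be sketched as follows. The frame representation (a flat list of already registered pixel values), the use of the frame median as the sky level and of a single reference star's flux to measure relative throughput are all assumptions for illustration.

```python
import statistics
from typing import List, Sequence

Frame = List[float]  # hypothetical: one registered frame as a flat list of pixel values


def normalise(frame: Frame, ref_flux: float, target_flux: float) -> Frame:
    """Subtract the frame's median (assumed to be the sky level), then rescale so a
    reference star measured at ref_flux in this frame would read target_flux."""
    sky = statistics.median(frame)
    gain = target_flux / ref_flux
    return [(p - sky) * gain for p in frame]


def grouped_median_then_mean(frames: Sequence[Frame], ref_fluxes: Sequence[float],
                             group_size: int = 10) -> Frame:
    """Normalise every frame, median-combine consecutive groups of group_size frames,
    then average the group medians pixel by pixel (a short final group counts equally)."""
    target = statistics.median(ref_fluxes)
    normed = [normalise(f, r, target) for f, r in zip(frames, ref_fluxes)]
    group_medians = []
    for start in range(0, len(normed), group_size):
        group = normed[start:start + group_size]
        group_medians.append([statistics.median(px) for px in zip(*group)])
    return [statistics.fmean(px) for px in zip(*group_medians)]
```

Because each frame is put on a common background and throughput scale before the median is taken, a transparency change no longer shifts which frame supplies the median value at a star's pixels.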

26 May 2019 at 4:52 pm #581087 Dr Paul Leyland

Please let us know if you find a freely available copy of the first paper.

26 May 2019 at 5:09 pm #581086 Dr Paul Leyland

See also https://en.wikipedia.org/wiki/Drizzle_(image_processing)

“Drizzle has the advantage of being able to handle images with essentially arbitrary shifts, rotations, and geometric distortion and, when given input images with proper associated weight maps, creates an optimal statistically summed image.” and “Results using the DRIZZLE command can be spectacular with amateur instruments.”

Richard Hook and I were at Oxford together. He recently retired from ESO and we’re in frequent email contact.
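How Drizzle treats pixel values matters for the photometry questions in this thread. In outline, Drizzle (variable-pixel linear reconstruction) does not fit splines: each input pixel is shrunk by a factor `pixfrac` into a "drop", mapped onto a finer output grid, and its value is added to the output pixels it overlaps, weighted by overlap area times the input weight. The one-dimensional sketch below assumes pure shifts (no rotation or distortion) and uses illustrative parameter names; it is not a production implementation.

```python
import math
from typing import List, Sequence, Tuple


def drizzle_1d(frames: Sequence[Sequence[float]], weights: Sequence[Sequence[float]],
               shifts: Sequence[float], n_out: int, scale: float = 0.5,
               pixfrac: float = 0.6) -> Tuple[List[float], List[float]]:
    """Illustrative 1-D drizzle for pure shifts.

    scale:   output pixel size in input-pixel units (0.5 = twice as fine).
    pixfrac: linear shrink factor applied to each input pixel (the "drop").
    Returns (output image, output weight map).
    """
    num = [0.0] * n_out
    den = [0.0] * n_out
    half = pixfrac / (2.0 * scale)                   # drop half-width in output pixels
    for data, wts, shift in zip(frames, weights, shifts):
        for i, (value, w) in enumerate(zip(data, wts)):
            centre = (i + 0.5 + shift) / scale       # drop centre on the output grid
            lo, hi = centre - half, centre + half
            for j in range(max(0, math.floor(lo)), min(n_out, math.ceil(hi))):
                overlap = min(hi, j + 1.0) - max(lo, float(j))
                if overlap > 0.0:
                    # value * scale converts flux per input pixel to flux per output pixel
                    num[j] += overlap * w * value * scale
                    den[j] += overlap * w
    image = [n / d if d > 0.0 else 0.0 for n, d in zip(num, den)]
    return image, den


if __name__ == "__main__":
    flat = [10.0] * 20
    ones = [1.0] * 20
    out, wmap = drizzle_1d([flat, flat], [ones, ones], [0.0, 0.5], n_out=40)
    print(f"total flux in: {sum(flat):.1f}, out: {sum(out):.1f}")
```

Each output pixel is a weighted average of the input values that overlap it, so with `pixfrac = 1` and no dithering the total flux is preserved exactly. Shrinking the drops sharpens the result but leaves gaps that only dithered frames can fill.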

26 May 2019 at 6:04 pm #581088 Grant Privett

I’m curious as to how you make a weight map. Are they looking at the darks, flats and defect maps, or doing something more sophisticated? Drizzle normally works best with large numbers of oversampled images though, doesn’t it?

What does that do to the photometry? Doesn’t drizzle interpolate values with a bicubic spline or something?

26 May 2019 at 6:39 pm #581089 Peter MeadowsParticipant

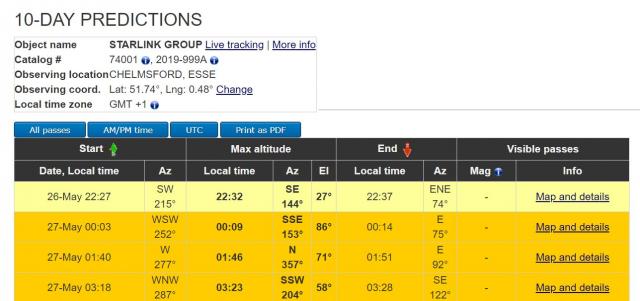

Peter MeadowsParticipantAccording to N2YO.com (https://www.n2yo.com/passes/?s=74001) there are three good passes over southern UK overnight such as the following from Chelmsford (note times are BST):

The most detailed information I’ve found on this constellation can be found at https://space.skyrocket.de/doc_sdat/starlink-v0-9.htm.

Peter

26 May 2019 at 7:09 pm #581090 Dr Paul Leyland

There are a number of ways. A common one is to estimate the background noise at each pixel after defect, dark and flat processing. A (relatively) simple way is to tile the image with a number of sub-images (say 64×64 pixels, by way of example) and compute the pixel histogram of each tile. Assuming that the majority of the pixels are sky and a minority are stars, discard the top 10% or so (which are presumably the stars) and fit a Gaussian to what remains (presumably the background). That Gaussian determines the sigma of the background at that point. Then interpolate (by whatever means: a biquadratic fit, perhaps, or cubic splines) to estimate the sigma at each pixel. The weight map is then 1/σ².

(Typo alert: undersampled, to be precise…)
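Paul's recipe for a weight map translates fairly directly into code. This sketch makes several assumptions. The image is taken to be already defect-, dark- and flat-corrected, with dimensions a multiple of the tile size. In place of a full Gaussian fit, σ is taken as the median minus the 15.87th percentile of the tile, which equals σ for Gaussian noise and ignores the bright tail, with quantile levels rescaled to allow for the discarded top 10%. Bilinear interpolation between tile centres stands in for the biquadratic or spline fit.

```python
import math
from typing import List

Image = List[List[float]]


def _percentile(sorted_vals: List[float], p: float) -> float:
    """Linearly interpolated percentile (p in [0, 1]) of an already sorted list."""
    pos = p * (len(sorted_vals) - 1)
    lo = math.floor(pos)
    hi = min(lo + 1, len(sorted_vals) - 1)
    return sorted_vals[lo] + (pos - lo) * (sorted_vals[hi] - sorted_vals[lo])


def tile_sigma(values: List[float], discard_top: float = 0.10) -> float:
    """Background sigma of one tile. The brightest pixels (assumed stars) are discarded;
    sigma is then median minus 15.87th percentile (a stand-in for a Gaussian fit),
    with the quantile levels rescaled onto the kept subset."""
    vals = sorted(values)
    keep = vals[: int(len(vals) * (1.0 - discard_top))]
    frac = len(keep) / len(vals)
    return _percentile(keep, 0.5 / frac) - _percentile(keep, 0.158655 / frac)


def weight_map(image: Image, tile: int = 64) -> Image:
    """Per-pixel weights 1 / sigma**2, sigma bilinearly interpolated between tile centres."""
    height, width = len(image), len(image[0])
    if height % tile or width % tile:
        raise ValueError("sketch assumes image dimensions are multiples of the tile size")
    ny, nx = height // tile, width // tile
    sig = [[tile_sigma([image[y][x]
                        for y in range(ty * tile, (ty + 1) * tile)
                        for x in range(tx * tile, (tx + 1) * tile)])
            for tx in range(nx)]
           for ty in range(ny)]

    def grid_pos(p: int, n: int) -> float:
        # pixel index -> fractional position on the grid of tile centres, clamped at edges
        return min(max((p + 0.5) / tile - 0.5, 0.0), n - 1.0)

    weights: Image = []
    for y in range(height):
        fy = grid_pos(y, ny)
        y0 = int(fy)
        y1 = min(y0 + 1, ny - 1)
        wy = fy - y0
        row = []
        for x in range(width):
            fx = grid_pos(x, nx)
            x0 = int(fx)
            x1 = min(x0 + 1, nx - 1)
            wx = fx - x0
            sigma = ((1 - wy) * ((1 - wx) * sig[y0][x0] + wx * sig[y0][x1])
                     + wy * ((1 - wx) * sig[y1][x0] + wx * sig[y1][x1]))
            row.append(1.0 / sigma ** 2)
        weights.append(row)
    return weights


if __name__ == "__main__":
    import random
    rng = random.Random(42)
    img = [[100.0 + rng.gauss(0.0, 8.0) for _ in range(128)] for _ in range(128)]
    for k in range(0, 128, 8):  # sprinkle a few bright "stars"
        img[k][(k * 5) % 128] = 5000.0
    w = weight_map(img, tile=64)
    print(f"weight at centre {w[64][64]:.5f} (noise-only value {1 / 8.0 ** 2:.5f})")
```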

26 May 2019 at 7:14 pm #581091

26 May 2019 at 8:45 pm #581092 Grant Privett

Hell’s teeth!

26 May 2019 at 10:15 pm #581093 Grant Privett

Had a look at the last two papers. It all looks pretty straightforward, though the devil will be in the detail: as they themselves admit, care is needed to ensure the implementation is robust against residual defects, cosmic rays and artefacts. It would be fairly easy (though fiddly) to implement in something like Python. It’s a shame some of the commercial packages only go for the simple solutions; it’s not as if CPU and memory are expensive any more.

It was surprisingly familiar, as I saw similar methods presented at an SPIE conference in 2003 or 2005, where there was a session on dim-source tracking techniques. (I cannot check which, as work went “smart working” a year or two back and lots of conference proceedings got thrown out when we lost our bookshelves.)

Will have a look at the other paper on Tuesday and report back.

26 May 2019 at 11:10 pm #581096 Nick James

Had a video running for this evening’s 21:30 UTC pass. The sky was bright and cloudy, but I recorded 14 of the Starlink spacecraft. I was using a Sony A7S with an 85 mm lens at f/1.4, 1/25 s, ISO 20000, pointed just left of Spica. The first one came along at the predicted time, but the rest were strung out over about 3.5 minutes. The limiting magnitude of the video was around 7.5; the spacecraft were all around mag 5.5.
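Two quick numbers behind this observation, as a sketch. The first is the 2-magnitude margin between the spacecraft (mag 5.5) and the video's limiting magnitude (7.5), converted to a brightness ratio with Pogson's relation. The second is the field of view of an 85 mm lens, assuming a nominal 36 × 24 mm full-frame sensor for the A7S (the exact sensor dimensions are an assumption here).

```python
import math


def flux_ratio(delta_mag: float) -> float:
    """Brightness ratio for a magnitude difference (Pogson: 5 magnitudes = factor 100)."""
    return 10.0 ** (0.4 * delta_mag)


def fov_deg(focal_mm: float, sensor_mm: float) -> float:
    """Full angular field across one sensor dimension for a rectilinear lens."""
    return math.degrees(2.0 * math.atan(sensor_mm / (2.0 * focal_mm)))


if __name__ == "__main__":
    # Magnitudes as reported in the post; 36 x 24 mm sensor size is an assumption.
    print(f"margin below video limit: {flux_ratio(7.5 - 5.5):.1f}x")
    print(f"85 mm field: {fov_deg(85.0, 36.0):.1f} x {fov_deg(85.0, 24.0):.1f} deg")
```

A 2-magnitude margin is a factor of about 6.3 in brightness, and the field is roughly 24° × 16°, wide enough to catch a string of satellites crossing near Spica.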
